Technically, a principal component can be defined as a linear combination of optimally weighted observed variables. The output of PCA is the set of these principal components, the number of which is less than or equal to the number of original variables. It is less when we wish to discard components and so reduce the dimensions of our dataset.

Covariance Matrix: This matrix consists of the covariances between the pairs of variables. The (i, j)th element is the covariance between the i-th and j-th variables.

In the eigenvalue equation Av = λv, v is the eigenvector and λ is the eigenvalue associated with it.
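To make the covariance matrix and the eigenvalue relation concrete, here is a minimal NumPy sketch. The toy array `X` is made up purely for illustration, and the choice of `np.linalg.eigh` (suited to symmetric matrices) is an assumption of this example rather than something the article prescribes.

```python
import numpy as np

# Hypothetical toy data: 6 observations (rows) of 3 variables (columns).
X = np.array([
    [2.5, 2.4, 1.2],
    [0.5, 0.7, 0.3],
    [2.2, 2.9, 1.1],
    [1.9, 2.2, 0.9],
    [3.1, 3.0, 1.6],
    [2.3, 2.7, 1.0],
])

# rowvar=False tells NumPy that columns are the variables, so cov is 3 x 3.
# Element (i, j) is the covariance between variable i and variable j;
# the diagonal holds each variable's variance.
cov = np.cov(X, rowvar=False)
print(cov.shape)

# eigh handles symmetric matrices; eigenvalues come back in ascending order
# and the matching eigenvectors are the columns of the second result.
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# Check A v = lambda v for every eigenvalue/eigenvector pair.
for lam, v in zip(eigenvalues, eigenvectors.T):
    assert np.allclose(cov @ v, lam * v)
```

Because a covariance matrix is symmetric, its eigenvalues are real and its eigenvectors can be chosen to be mutually orthogonal.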

Importantly, the dataset on which the PCA technique is to be used must be scaled, because the results are sensitive to the relative scaling of the variables. For a layman, PCA is a method of summarizing data. Imagine some wine bottles on a dining table. Each wine is described by attributes such as colour, strength and age. But redundancy will arise because many of them measure related properties. So what PCA does in this case is summarize each wine in the stock with fewer characteristics. Intuitively, Principal Component Analysis can supply the user with a lower-dimensional picture, a projection or "shadow" of the dataset when viewed from its most informative viewpoint.

Image Source: Machine Learning Lectures by Prof.

Dimensionality: It is the number of random variables in a dataset, or more simply the number of features, that is, the number of columns present in your dataset.

Correlation: It shows how strongly two variables are related to each other. Its value ranges from -1 to +1. A positive value indicates that when one variable increases, the other increases as well, while a negative value indicates that the other decreases as the former increases. The modulus of the value indicates the strength of the relation.

Orthogonal: Uncorrelated to each other, i.e., the correlation between any pair of variables is 0.

Eigenvectors: Eigenvectors and eigenvalues are a big domain in themselves, so let's restrict ourselves to the knowledge we require here. Consider a non-zero vector v. It is an eigenvector of a square matrix A if Av is a scalar multiple of v.
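The points about scaling, dimensionality and correlation can be illustrated with a short NumPy sketch. The height and weight data and the helper `standardize` are hypothetical, invented for this example; z-score scaling is one common choice, not necessarily the one the article has in mind.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical data: two related features measured on very different scales.
height_m = rng.normal(1.7, 0.1, size=200)
weight_g = 70_000 + 40_000 * (height_m - 1.7) + rng.normal(0, 5_000, size=200)
X = np.column_stack([height_m, weight_g])

# Dimensionality is simply the number of columns (features).
n_features = X.shape[1]

# Correlation between the two features; it always lies between -1 and +1.
r = np.corrcoef(X, rowvar=False)[0, 1]


def standardize(data):
    """Scale each column to zero mean and unit variance (z-scores)."""
    return (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)


X_scaled = standardize(X)

# Before scaling, weight's variance dwarfs height's purely because of its units,
# so it would dominate a covariance-based PCA. After scaling, both are 1.
print(np.var(X, axis=0, ddof=1))
print(np.var(X_scaled, axis=0, ddof=1))
```

Standardizing does not change the correlation between the features; it only removes the effect of the units they were measured in.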

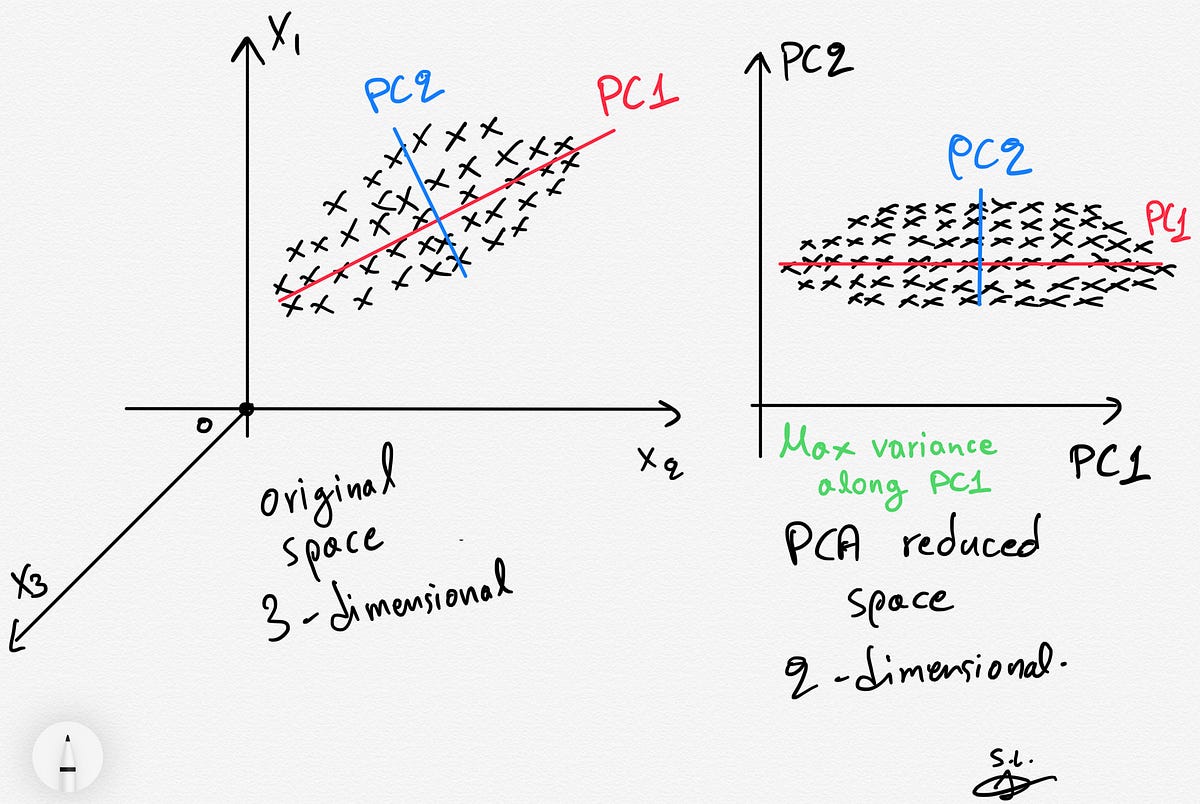

The main idea of principal component analysis (PCA) is to reduce the dimensionality of a dataset consisting of many variables that are correlated with each other, either heavily or lightly, while retaining as much of the variation present in the dataset as possible. This is done by transforming the variables to a new set of variables, known as the principal components (or simply, the PCs). The PCs are orthogonal and are ordered such that the amount of the original variation they retain decreases as we move down the order. So, in this way, the 1st principal component retains the maximum variation that was present in the original variables. The principal components are the eigenvectors of a covariance matrix, and hence they are orthogonal.
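The procedure described here can be sketched in a few lines of NumPy. The function name `pca` and the synthetic data are assumptions made for illustration, and the sketch only centers the data, so it presumes the features are already on comparable scales.

```python
import numpy as np


def pca(data, n_components):
    """Sketch of PCA via eigendecomposition of the covariance matrix."""
    # Center each column so the covariance is measured around the mean.
    centered = data - data.mean(axis=0)
    cov = np.cov(centered, rowvar=False)

    # Eigenvectors of the symmetric covariance matrix are the principal axes.
    eigenvalues, eigenvectors = np.linalg.eigh(cov)

    # Reorder so the component with the largest variance comes first.
    order = np.argsort(eigenvalues)[::-1]
    eigenvalues = eigenvalues[order]
    eigenvectors = eigenvectors[:, order]

    components = eigenvectors[:, :n_components]
    scores = centered @ components  # data expressed in the new variables
    explained_ratio = eigenvalues[:n_components] / eigenvalues.sum()
    return scores, components, explained_ratio


rng = np.random.default_rng(42)
# Hypothetical correlated data: 3 observed variables driven by 2 latent factors.
latent = rng.normal(size=(300, 2))
mixing = np.array([[2.0, 0.5, 1.0],
                   [0.0, 1.0, 0.5]])
X = latent @ mixing + 0.1 * rng.normal(size=(300, 3))

scores, components, explained_ratio = pca(X, n_components=2)
print(explained_ratio)
```

The returned `explained_ratio` is in decreasing order, which mirrors the statement that each successive PC retains less of the original variation.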

#Add pca column back to data code
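The snippet that this comment belongs to is not shown, so the following is only a sketch of one way to attach a PCA column to the original data using NumPy. The array `data`, the column names, and the choice to keep just the first principal component are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
data = rng.normal(size=(50, 4))        # hypothetical dataset: 50 rows, 4 features
columns = ["f1", "f2", "f3", "f4"]     # hypothetical column names

# First principal component: the eigenvector of the covariance matrix
# that has the largest eigenvalue.
centered = data - data.mean(axis=0)
eigenvalues, eigenvectors = np.linalg.eigh(np.cov(centered, rowvar=False))
first_pc = eigenvectors[:, np.argmax(eigenvalues)]

# Score of every row along the first principal component.
pc1_scores = centered @ first_pc

# Append the scores as a new column next to the original features.
data_with_pca = np.column_stack([data, pc1_scores])
columns_with_pca = columns + ["pc1"]
print(data_with_pca.shape)
```

Keeping the original features alongside the new column makes it easy to compare each row's PC score with the raw values it was derived from.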
